OpenAI has announced the expansion of ChatGPT’s capabilities, enabling it to process voice and image inputs. This development signifies a shift towards a more intuitive interface, allowing users to engage in voice conversations and visually show ChatGPT their queries.

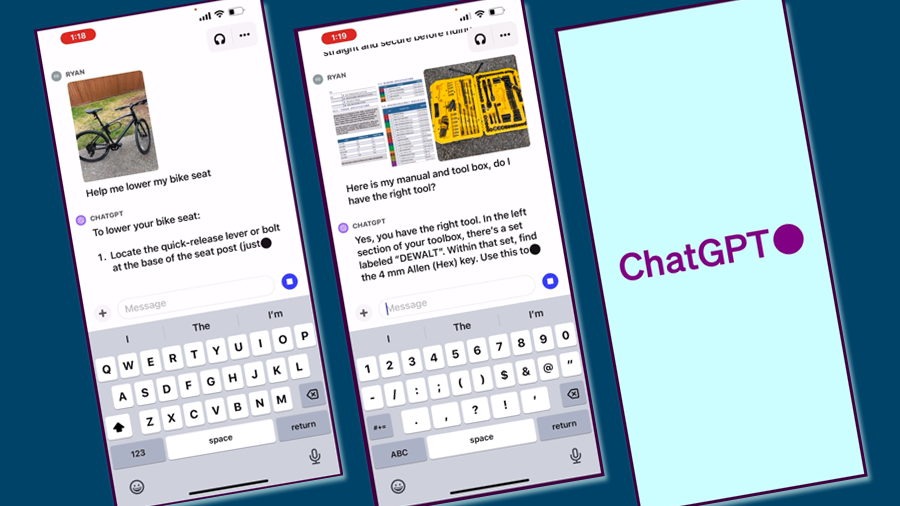

The new features give users more ways to use ChatGPT. For instance, users can take a photo of a landmark and hold a live conversation about its significance. At home, they can photograph the contents of their refrigerator to brainstorm meal ideas, then ask for step-by-step recipes. Helping a child with homework also becomes easier: a user can photograph the problem, highlight the specific part causing difficulty, and receive guidance from ChatGPT.

OpenAI plans to roll out these voice and image features to Plus and Enterprise users over the next two weeks. Voice will be available on iOS and Android, while the image capability will be available on all platforms.

Engaging Voice Conversations with ChatGPT

Users can now converse with ChatGPT using voice, making it convenient to interact on the move, request stories, or resolve debates. To activate this feature, users need to navigate to Settings → New Features on the mobile application and enable voice conversations. They can then select from five distinct voices for their assistant.

The voice feature is driven by a new text-to-speech model that can produce human-like audio from text and a brief sample of speech. OpenAI worked with professional voice actors to create the voices, and it uses Whisper, its open-source speech recognition system, to convert spoken words into text.

Image Discussions with ChatGPT

ChatGPT can now analyse one or more images. This can help users in various scenarios, such as troubleshooting equipment issues, planning meals based on available ingredients, or analysing a complex graph of work-related data. The mobile app also offers a drawing tool for highlighting the specific area of an image that ChatGPT should focus on.

The image comprehension is facilitated by multimodal GPT-3.5 and GPT-4 models. These models apply their linguistic reasoning abilities to various images, including photographs, screenshots, and documents with both text and visuals.

Gradual Deployment for Safety

OpenAI emphasises the importance of a cautious and phased release of these advanced features. The organisation's stated goal is to build AGI (Artificial General Intelligence) that is safe and beneficial, and it believes in making its tools available gradually. This approach allows for continuous refinement and risk mitigation while preparing users for more powerful systems in the future.

Voice Technology and Its Implications

The new voice technology can produce realistic synthetic voices from brief samples of real speech. While this opens up many creative and accessibility applications, it also poses risks, such as malicious actors impersonating public figures or committing fraud. For this reason, OpenAI is limiting the technology to specific use cases: the voice chat feature, built with voice actors it has worked with directly, and collaborations with companies such as Spotify, which is using the technology for its Voice Translation feature.

Vision-based models come with their own set of challenges, from potential misinterpretations to users relying on the model’s interpretation of images in high-stakes domains. OpenAI says it has conducted extensive testing and research to support responsible usage.

OpenAI has collaborated with Be My Eyes, an app designed for the visually impaired, to understand the potential uses and limitations of the vision feature. Feedback from users has been instrumental in refining the tool, ensuring it remains beneficial while respecting privacy.

OpenAI acknowledges that while ChatGPT is proficient in certain areas, it has its limitations. The organisation advises users to exercise caution, especially when relying on the model for specialised topics or non-English transcriptions.

OpenAI is enthusiastic about offering the voice and image features to a broader user base, including developers, following the initial rollout to Plus and Enterprise users.

Read the full story here.

